There are five senses: touch, taste, smell, sight and hearing. Computers have always been tactile – you have to touch them in order to use them (at least until relatively recently). You can twiddle knobs, push buttons, punch keys, pull joysticks, drag mice… Computer engagement through sight has also always been a thing, whether through blinking lights, printouts, CRTs, or LCD and LED panels.

The practical applications of a computer creating smells or tastes are somewhat limited (in a personal context; I’m sure there are computers making perfumes and soup seasonings somewhere, but that’s not what I’m thinking of) – there’s probably no need to smell your database search or taste your rocket trajectory.

But sound… computers were made for it!

Sound is ultimately mathematics. Even noise is not ‘noise’, per se – it has component parts, each of which is there for a reason, and each of those reasons at its root has a formula of some kind: a hammer hitting a rock causes a specific sound, depending on the density of the rock and the type of metal in the hammer. A car crash is a symphony of thousands of sounds, each the result of some sort of impact. If you modeled a car and then simulated a crash, you could algorithmically recreate them, mixing them together appropriately depending on the point-of-view of the observer (or victim).

Given that, music should be a piece of cake!

The first computer to play music was the CSIRAC, Australia’s first digital computer, in 1950. Mathematician Geoff Hill used the computer to vibrate a speaker, generating a 1-bit square wave. In 1951 the CSIRAC publicly played the “Colonel Bogey March”.

Some early personal computers (the Apple II, the ZX Spectrum, the IBM PC) would use the same process to generate audio, sending electrical pulses to built-in speakers. While you can make simple bleeps and bloops, playing more than one sound or tone at once is complex and the result is generally poor. The method is simple and cheap, but it relies on the CPU to constantly ‘hit’ the speaker, which has a significant cost in terms of processor usage.
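To make the idea of a 1-bit square wave concrete, here is a minimal Python sketch. It doesn't drive a real speaker; it just produces the stream of on/off states a CPU would have to send, one per sample. The function name `one_bit_square_wave` and the 8000 Hz sample rate are my own assumptions for illustration, not anything taken from these machines.

```python
def one_bit_square_wave(frequency_hz, duration_s, sample_rate=8000):
    """Return a list of 0/1 speaker states approximating a square wave."""
    # Number of samples the speaker stays in one state (half a cycle).
    half_period = sample_rate / (2 * frequency_hz)
    states = []
    for n in range(int(duration_s * sample_rate)):
        # Flip between 0 and 1 every half period.
        states.append(int(n // half_period) % 2)
    return states


# One millisecond of a 1000 Hz tone: four samples off, four samples on.
print(one_bit_square_wave(1000, 0.001))
```

Every one of those flips has to be timed by the processor itself, which is why this approach eats so much CPU time.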

But at least the computer made noise (unlike the Sinclair ZX80/81 or original Commodore PET, for example), so there was that.

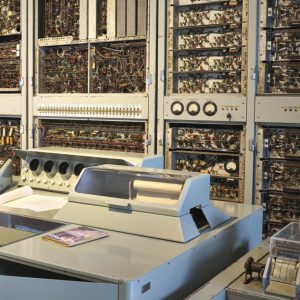

Computers would begin to make more sophisticated sounds in 1957, after engineer Max Mathews of Bell Labs wrote MUSIC I on a valve (vacuum-tube)-based IBM 704. MUSIC I was able to generate a simple triangle wave tone with no attack or decay control, only amplitude, frequency and duration – amplitude perhaps being the only advantage over the CSIRAC’s 1-bit beeper. (Americans seem to credit MUSIC with generating the first computer music and ignore the CSIRAC but as a Canadian I will have to side with the Australians on this one.)

However, by 1958 MUSIC had made some pretty serious leaps forward: running on the transistor-based IBM 7094-series, MUSIC II had four-voice polyphony and could generate sixteen different sound wave shapes. By the next year Mathews had added ‘unit generators’ – basically the ability to chain various bits of code (oscillators, filters, envelope shapers etc.) together using a syntax entered on a punch card (much like connecting various pieces of audio equipment together in sequence using patch cables).

MUSIC III could also take a musical score (on another punch card) and combine it with the instrument definitions to create a digital song on computer tape (after a while…). MUSIC IV was a rewrite co-written with Joan Miller and completed in 1963, and MUSIC V was rewritten once again in 1967 – this time in the FORTRAN programming language specifically for the IBM 360-series of mainframe computers. MUSIC V could run on any IBM 360 and, as Mathews convinced Bell Labs not to copyright the software (what did they pay him for? Seriously), it spread far and wide.

In the 1970s people started hooking computers up to analogue synthesisers. The computers would electronically trigger the keys and control the various oscillators and other controls, and they could do so very rapidly, generating new sounds otherwise literally unheard of. A specialised computer, the Roland MC-8 MicroComposer designed by Canadian Ralph Dyck, was released in 1977 using the then-new Intel 8080 processor (the one used in the Altair). However, it cost US$5000 (over US$20,000 today!) and consequently few units were sold.

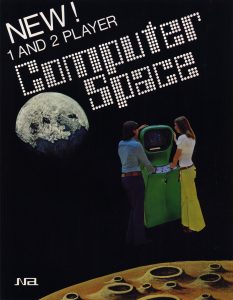

Six years earlier Nolan Bushnell and Ted Dabney had developed Computer Space, the first arcade video game, which had solid-state circuitry that generated several different sounds simultaneously – impressive considering it was the first. A year later Bushnell’s Atari released Pong, which had only simple bleeps and bloops but would be the first time many people would’ve heard electronically-generated sound directly from the oscillator’s output. But neither of these games was particularly musical.

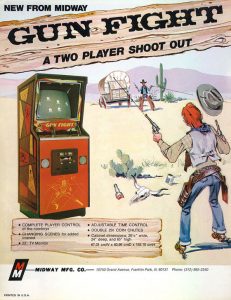

The first videogame music appeared in 1975. The western duel game Gun Fight featured a little death ditty. It wasn’t much and it was repetitive but it was music! The US version of Gun Fight was also the first arcade game to use a microprocessor (the 8080, making another appearance). Space Invaders (1978) was the first to have music during play, a four-note descending bass ditty that repeated over and over, increasing in pace as the aliens got closer.

As the sound in videogames improved, those advancements immediately became what was expected by arcade players in successive games. The Atari 2600, released in 1977, marketed itself as a home arcade system, and came with a simple sound chip that allowed for some music, but unfortunately notes were programmed at intervals that substantially differed from those of the usual Western chromatic scale. As a result, melodies sounded strange and alien, and attempts to replicate tunes used in arcade games fell literally flat.

During this same period, home computers were beginning to emerge as a viable industry, their increasing manufacture (and that of arcade machines and consoles) driving the price of microchips down.

Atari would hope to redeem itself for the 2600’s terrible sound with its home computer line, released in 1979. Engineer Doug Neubauer designed its POKEY chip. It was capable of four channels of 8-bit tone resolution (or two 16-bit channels) and each channel could either be a square wave or noise. Unfortunately in four-channel mode, with only 256 possible tone values, some ‘notes’ were still a bit off! In two-channel mode, accuracy was much better. You could also add distortion to make notes sound ‘meatier’.
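Why would 256 tone values leave notes out of tune? A rough sketch helps. It assumes a simplified divider model in which the output frequency is `clock / (2 * (N + 1))` for a register value `N`, with clocks of roughly 64 kHz for the 8-bit case and 1.79 MHz for the 16-bit case. Both the formula and the clock figures are my assumptions for illustration (the real chip's 16-bit mode differs in detail), and the function names are hypothetical.

```python
import math


def cents_off(actual_hz, target_hz):
    """Tuning error in cents (100 cents = one semitone)."""
    return 1200 * math.log2(actual_hz / target_hz)


def nearest_divider(target_hz, clock_hz, bits):
    """Pick the divider N that gets clock/(2*(N+1)) closest to the target."""
    max_n = (1 << bits) - 1
    ideal = clock_hz / (2 * target_hz) - 1
    # Only the two integers either side of the ideal value can be the best fit.
    candidates = [min(max(n, 0), max_n)
                  for n in (math.floor(ideal), math.ceil(ideal))]
    best = min(candidates,
               key=lambda n: abs(cents_off(clock_hz / (2 * (n + 1)), target_hz)))
    return best, cents_off(clock_hz / (2 * (best + 1)), target_hz)


# Assumed clocks: roughly 64 kHz for 8-bit mode, 1.79 MHz for 16-bit mode.
n8, err8 = nearest_divider(880.0, 64_000, 8)
n16, err16 = nearest_divider(880.0, 1_790_000, 16)
print(f"8-bit:  N={n8}, {err8:+.1f} cents")
print(f"16-bit: N={n16}, {err16:+.2f} cents")
```

Under these assumptions, the closest 8-bit setting to an 880 Hz A lands about 17 cents sharp, while the 16-bit setting is off by a small fraction of a cent. The coarser the divider, the bigger the gaps between the pitches you can actually reach.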

The POKEY wasn’t just used in Atari’s home computers – they used them in arcade games as well (including Centipede, Missile Command and Gauntlet).

Commodore realised that if it was going to compete with Atari it was going to need sound in its new low-cost computer. Commodore engineer Al Charpentier had designed a combination video and sound chip in 1977 intended to be used in a console competitor for the Atari 2600, but Commodore couldn’t find a buyer for the chip and had no appetite to market their own videogame system, that being considered ‘off brand’.

So they used the chip, known as the VIC (Video Interface Chip) as the basis for the VIC-20. The VIC had three pulse-wave generators, each of which had a range of three octaves, and each an octave apart (giving a range of five octaves). It also had a noise generator, but only a single volume control for all of them. But that was enough for buyers of the VIC-20, which sold like hotcakes, becoming the first computer model to sell over a million units.

Across the pond, meanwhile, British company Acorn was developing a computer for the BBC known as the BBC Micro. It used the Texas Instruments SN76489 chip for sound, which had three square wave generators (with 10-bit frequency precision) at 16 different volume levels, and a noise generator. The chip was also used in Texas Instruments’ TI-99/4A, the Colecovision and the IBM PCjr.

The SN76489 was similar in design to the General Instrument AY-3-8910 chip, which was used in the ZX Spectrum 128, Amstrad CPC, the MSX family, and as part of a popular sound card for the Apple II called the Mockingboard.

Yamaha licensed the chip design and manufactured a variant of it known as the YM2149F. The variant was used in the 1985 Atari ST, whose beefier 16-bit processor could drive it quickly enough that four-channel digital sound was possible through it, although at nowhere near the quality of the Paula chip present in the Commodore Amiga, which featured four 8-bit PCM audio channels.

The Atari ST was always the poorer cousin of the Amiga in terms of digital sound, but made up for it somewhat in that, unlike the Amiga, it could still play 8-bit waveform-based music, which by that point had become quite sophisticated, composers using varied techniques to create a veritable orchestra of different instrument timbres.

Videogames arguably sounded ‘better’ on the Atari ST, despite the Amiga’s superior hardware. And for ‘quality’ music, the ST had built-in MIDI ports, which allowed for the connection of external sound generators and samplers such as the Roland Sound Canvas.

But we can’t finish a discussion of early computer music without talking about the king of sound chips, the Sound Interface Device, or SID.

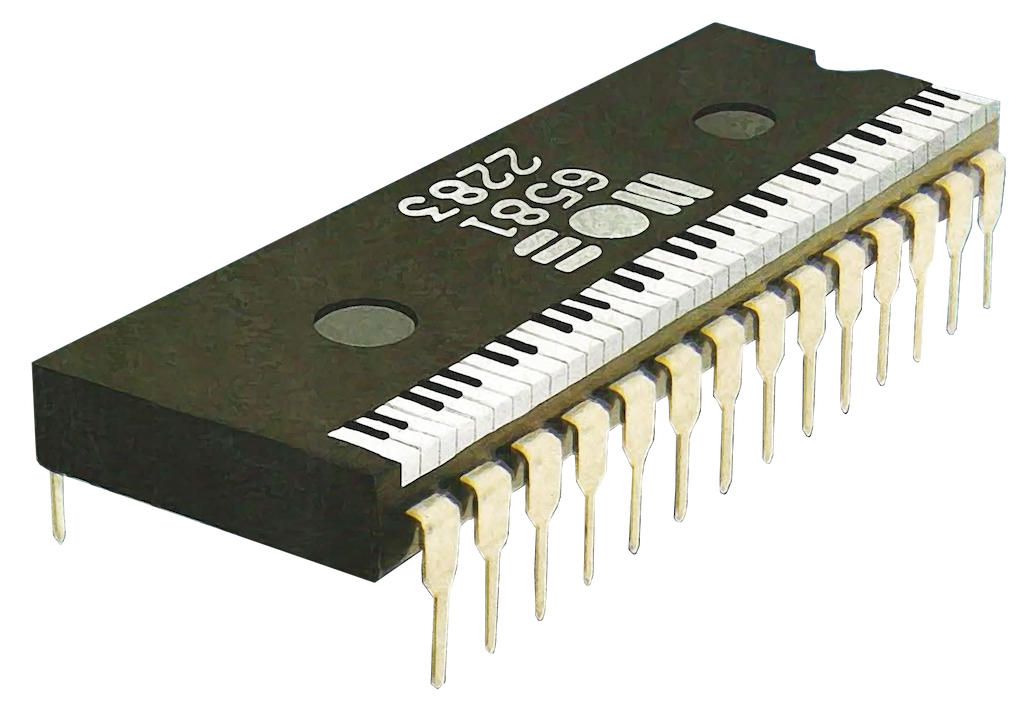

The pinnacle of the 8-bit music era was the SID chip. The MOS Technology 6581 Sound Interface Device was developed by engineer Bob Yannes. VIC chip designer Al Charpentier had recruited Yannes due to his knowledge of music synthesis – Bob was an electronic music hobbyist and had been infatuated with it since the early 1970s.

When the chipset for the Commodore 64 was being designed, he took on the task of designing its sound hardware. “I thought the sound chips on the market, including those in the Atari computers, were primitive and obviously had been designed by people who knew nothing about music.” Yannes was determined to design a high-quality instrument chip, with features common to contemporary synthesisers.

Although he only had five months to design it, the finished product was, according to colleague Charles Winterble, “10 times better than anything out there and 20 times better than it needs to be.”

Rather than working to a pre-determined set of specifications, the SID team developed the chip organically, adding features as they were practicable. Yannes had a wish-list, and his team managed to implement three-quarters of it before they ran out of time – which was quite the achievement given that the list of features was impressive for the time:

- Three oscillators each with an 8 octave range (16-4000 Hz)

- Four different waveforms (sawtooth, triangle, pulse and noise – other chips usually only used one waveform and had a single noise channel)

- A filter that could operate in low-pass (remove high frequencies), band-pass (remove high and low) and high-pass (remove low) modes.

- Three ADSR (Attack, Decay, Sustain, Release) envelope generators, which allowed for customised volume shaping.

- Three ‘ring modulators’ capable of multiplying two waveforms together, creating a distinctive third.

- Two analog-to-digital converters used for paddles or mice.

All of these features allowed for the construction of extremely sophisticated timbres at a wide range of frequencies, resulting in music that was unlike anything anyone had heard out of a home computer (or videogame console) before.
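Two items on that list are easy to sketch in a few lines of Python: an envelope generator that shapes volume over the life of a note, and ring modulation as a simple multiplication of two waveforms. This is an idealised illustration only, not a model of the SID's internal circuitry; the function names, the linear ramps and the parameters in seconds are all my own assumptions.

```python
def envelope(t, attack, decay, sustain_level, release, note_length):
    """Volume (0.0 to 1.0) at time t seconds after the note starts.

    Assumes attack, decay and release are positive and that the key is
    held for at least attack + decay seconds (note_length).
    """
    if t < 0:
        return 0.0
    if t < attack:                       # ramp up from silence to full volume
        return t / attack
    if t < attack + decay:               # fall from full volume to the held level
        progress = (t - attack) / decay
        return 1.0 - progress * (1.0 - sustain_level)
    if t < note_length:                  # hold steady while the key is down
        return sustain_level
    if t < note_length + release:        # fade out after the key is released
        return sustain_level * (1.0 - (t - note_length) / release)
    return 0.0


def ring_modulate(wave_a, wave_b):
    """Multiply two equal-length waveforms sample by sample."""
    return [a * b for a, b in zip(wave_a, wave_b)]


for t in (0.05, 0.15, 0.5, 1.1, 2.0):
    print(t, envelope(t, attack=0.1, decay=0.1, sustain_level=0.5,
                      release=0.2, note_length=1.0))
print(ring_modulate([1.0, -1.0, 0.5], [0.5, 0.5, -1.0]))
```

Multiplying each oscillator sample by the envelope value at that moment is what turns a flat drone into something that sounds plucked, bowed or struck.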

After all, comparing the SID to contemporary sound chips such as the AY-3-8910 was like comparing the Mona Lisa to a puddle of paint on a sidewalk – sure, paint is involved in both of them but there’s an obvious discrepancy in terms of sophistication and quality.

Videogame music composers embraced the SID with relish, quickly discovering undocumented ‘features’ such as the ability to modulate an unintended ‘click’ that occurred when the volume register was altered in order to play back 4-bit (16 level) digital audio samples – this technique was used in games such as Ghostbusters and Impossible Mission to reproduce speech, and in Arkanoid to replicate musical instruments.
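The ‘16 level’ part of that trick is simple to illustrate. The sketch below squeezes ordinary audio samples (floats between -1.0 and 1.0) down to the sixteen values a 4-bit register can hold. It says nothing about how the values were actually fed to the chip or timed; the function name `to_four_bit` and the float input format are assumptions of mine.

```python
def to_four_bit(samples):
    """Map samples in the range -1.0..1.0 onto 4-bit levels 0..15."""
    levels = []
    for s in samples:
        s = max(-1.0, min(1.0, s))            # clip anything out of range
        levels.append(round((s + 1.0) / 2.0 * 15))
    return levels


print(to_four_bit([-1.0, -0.5, 0.25, 1.0, 2.0]))
```

With only sixteen steps between silence and full volume the result is gritty, but it is plenty for a recognisable shout or a drum hit.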

But the SID wasn’t just used for videogame tunes – the wide variety of instrument timbres that could be produced by it also made it attractive to those seeking to write music for its own sake, in a wide variety of genres from jazz to classical to rock-and-roll.

To be friendlier to traditional composers, many music applications, for example Will Harvey’s Music Construction Set, used musical notation to enter tones. These programs usually offered a fixed set of instruments to choose from.

After the introduction of SoundTracker on the Amiga, which processed notes using a ‘piano roll’ paradigm, similar “trackers” began to appear for the Commodore 64, including Cybertracker. Trackers bridged the gap between traditional notation and complex direct configuration of the SID chip’s registers, allowing for both more rapid and straightforward entry of music. Trackers gave users the power to create sophisticated instruments without needing to know the intricacies of the SID and the 64 to do it.

So, what if you want to create music with the SID today? Modern music programs include SID-Wizard, a music tracker, and MSSIAH, a cartridge that contains a MIDI port and a number of software packages including a sequencer and a drum machine.

There’s also the Cynthcart, which turns the C64 into a synthesiser you can play live, using a MIDI keyboard (or the C64 keyboard, if you’re really desperate!)

In any case, it wasn’t long before the SID was recognised as the gold standard of 8-bit (and 16-bit, for that matter) computing sound hardware, and this helped to propel sales of the Commodore 64, which eventually became the most popular home computer model of all time.

As for Yannes? He went on to found Ensoniq along with Charpentier and several other former MOS Technology engineers. Ensoniq designed a number of keyboard synthesisers such as the Mirage DSK-1 and the ESQ-1, which were valued for their ‘warm’ sound. Ensoniq designed the sound chip used in the Apple IIGS, and also designed a number of computer sound cards, including the Ensoniq Soundscape.
